Pretraining Economics for Distributed Multi-Node LLM Training, and a justification for Cluster Resiliency

How do we systematically evaluate compute cluster costs, and design an effective system for fault-tolerant LLM pretrains?

The following article stems from an abridged talk that I gave at an internal paper-reading session at Zyphra Technologies on cluster resiliency and the Aegis system. As the talk was quite general, I believe there’s quite a bit of insight here for labs looking to feel more certain about an upcoming round of pre-training.

For a non-trivial duration, I have dedicated a significant amount of time and resources to leading, as a majority stakeholder, the design, planning, and implementation of the Aegis cluster resiliency system at Zyphra, building up our infrastructure completely from scratch. Over this period, I’ve gained intuition about common pre-training failures on new AMD hardware, along with their relative frequencies. We do touch upon this in our Zaya-1 paper, so I’m going to try to build upon that information as opposed to starting from scratch.

I’ll answer the following questions:

How should AI labs calculate the total costs of running a compute cluster, given that we wish to pre-train a model for a certain number of tokens?

The actual calculation

Practical reality constraints: training efficiency, along with some good priors

Related question: how do we estimate the opportunity cost for a failure?

Under what specific constraints should a lab consider investing in cluster resiliency? What are the practical realities of development?

The irritating lack of literature, some good papers, and a good north star paper to shoot for. How do the failure frequencies translate to the AMD stack?

1 - 2 sentences on good first steps towards home-growing a cluster resiliency system.

Pretrain Time Economics

Ideal Costs

Let’s walk through how we can estimate the costs of pretraining a large language model (LLM). The calculation can be easily adapted for vision, audio, and other large scale foundational models by tweaking the conversion factor.

Say we want to train a model for 10 trillion (10T) tokens, which is pretty reasonable for an 8B parameter model. Additionally, assume that we are given a compute cluster with 1024 GPUs. Finally, assume that the per-hour rate we’re given for reserved MI300Xs is $1.99 / hr per GPU.

A critical number to capture from ablations before kicking off a pretraining run is the iteration time (abbv. iter time) per training step, which consists of your forward step, backward step, and optimizer sync. Capturing this number is good practice in general, because a pretraining-focused performance engineering team would then try to use it as a baseline for speedups.

Suppose our iter time is 13 seconds for a global batch size of 2048 samples, and that our sequence length per sample is 65,536 tokens. Our ideal training duration, assuming zero failures, can be calculated as follows:

train time (in seconds) =

(iteration time (s) / iter) * (1 iter / global batch size samples) *

(1 sample / sequence length tokens) * (total tokens to train on)

This formula basically just assumes standard next-token prediction.

Note that we use the global batch size (GBS) here and don’t really factor in the micro batch size (MBS). Tuning the MBS to saturate a single GPU would automatically alter your GBS. If you are not actively leveraging data parallelism, then this is probably just “batch size”.

In this case, our ideal training duration is as follows:

train time (in seconds) =

13 * (1 / 2048) *

(1 / 65536) * (10 * 10^12) ~ 968575 seconds

That’s approximately 968575 seconds * (1 hour / 3600 seconds) = 269 hours!

Now, our cost of GPUs per hour is

total cost / hour = number of GPUs reserved * cost / hour of a single GPU

i.e., $1.99 * 1024 = $2037.76 / hour. So the total cost is

269 hours * $2037.76 / hour = $548,157.44

Glancing briefly, these numbers seem to check out with those from the Smol-LM training playbook. But this is assuming zero failures. What is the opportunity cost per failure? We can quantify this simply from the number of hours the GPU cluster was idling.

In this scenario, every hour the cluster sits idle after a failure costs $2037.76.
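The arithmetic above is easy to wrap in a couple of helpers so you can plug in your own cluster. This is just a minimal sketch of the same formula; the function names are my own for illustration, and the inputs are the example numbers from this section.

```python
def ideal_train_hours(iter_time_s, global_batch_size, seq_len, total_tokens):
    """Failure-free training time in hours (same formula as in the text)."""
    tokens_per_iter = global_batch_size * seq_len
    num_iters = total_tokens / tokens_per_iter
    return iter_time_s * num_iters / 3600.0


def cluster_cost_per_hour(num_gpus, gpu_hourly_rate):
    """Hourly cost of the whole reservation; also the cost of one idle hour."""
    return num_gpus * gpu_hourly_rate


# Example numbers from this section.
hours = ideal_train_hours(
    iter_time_s=13.0,
    global_batch_size=2048,
    seq_len=65_536,
    total_tokens=10 * 10**12,
)
rate = cluster_cost_per_hour(num_gpus=1024, gpu_hourly_rate=1.99)
ideal_cost = hours * rate

print(f"ideal duration: {hours:.1f} hours")
print(f"cluster rate:   ${rate:,.2f} / hour")
print(f"ideal cost:     ${ideal_cost:,.2f}")
```

Note that carrying the unrounded duration (about 269.05 hours) through the multiplication gives a total roughly $100 higher than the figure computed from the rounded 269 hours.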

Now, you’d also need to take into account the experience level of your team. To estimate this easily, let’s define a training efficiency coefficient, e, as an estimate of the fraction of total hours that are actively used to perform forward and backward steps. Even with extremely experienced, competent stewards, a first training run’s efficiency is reasonably capped at 0.6.

So our effective training time becomes 269 / 0.6 ~ 448 hours. A realistic forecasted cost for this pretrain, accounting for practical realities, is

(269 / 0.6) hours * $2037.76 / hour = $913,595.73

Nearly a million dollars. How do we drive this cost down? While a performance engineering team may work on driving iter time down, assume as a worst case that it stays roughly the same. Then the easiest way to drive down costs is to boost our training efficiency coefficient. This is what cluster resiliency seeks to do.
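To get a feel for how much each point of efficiency is worth, here is a small sketch that sweeps the efficiency coefficient e. Only e = 0.6 comes from the discussion above; the other values are illustrative assumptions, and the function name is hypothetical.

```python
IDEAL_HOURS = 269.0       # failure-free estimate from the walkthrough
CLUSTER_RATE = 2037.76    # dollars per hour for 1024 GPUs at $1.99 each


def effective_cost(ideal_hours, cluster_rate, efficiency):
    """Forecasted cost when only `efficiency` of paid hours do useful work."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    effective_hours = ideal_hours / efficiency
    return effective_hours * cluster_rate


# 0.6 is the prior from the text; the rest are illustrative what-ifs.
for e in (0.6, 0.7, 0.8, 0.9):
    cost = effective_cost(IDEAL_HOURS, CLUSTER_RATE, e)
    print(f"e = {e:.1f}: ${cost:,.2f}")
```

The cost scales as 1 / e, so the same absolute gain in efficiency saves more money when you start from a low e.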

Failures, and practical reality

Most hardware companies use NVIDIA. However, Zyphra has partnered with AMD and IBM and uses a training cluster of MI300Xs. We do see some of the same failures that NVIDIA peeps see, but there are differences. I’ll go over those, but first I need to lay down some terminology.

I’ll adopt terminology from the ByteRobust cluster resiliency paper, which I see as a great north star to look towards with results that I can corroborate to some extent.

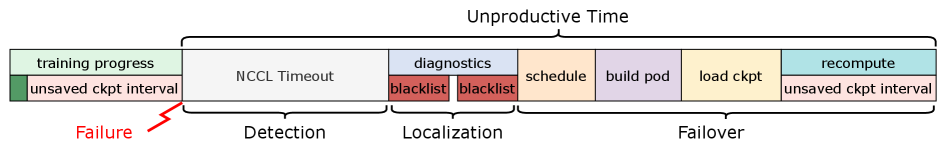

We should assume that a failure actually occurs some unspecified time delta k before it is detected. Usually k is pretty small for an instantaneous failure due to development (say, if your code fails), but it can be as large as 15-30 mins for a communications (abbv. comms) failure.

After the detection point, some time is required to figure out what actually happened and what the root cause of the issue was. For example, in a communications hang, you need to determine which node’s network interface card (NIC) is broken. That time is referred to as localization.

After the fix is applied, the training team rekicks the run. But the time from a restart to the first iteration is still significant, primarily due to loading your checkpoint. That time is known as failover.

I’ll list the most common failure points that I recall for the AMD cluster:

Dev Failures: ~70% of failures. The reason we don’t think too much about these is that we don’t burn a lot of time restarting, as long as we have an optimized failover time.

Comms Hangs: ~10% of failures. These are pretty frequent, and the localization time is roughly proportional to log2(N), where N is the number of nodes in your cluster. One of the most annoying bugs to solve.

Silent Data Corruptions: ~5% of failures. These are extremely common in new GPU/TPU hardware. Essentially, the hardware itself faces a data corruption that no internal sanity check mechanism is able to find. Typically, you should be on the lookout for this by looking for unexpected loss spikes in your experiment tracking infra (Wandb, Aim, etc). These take the most time to localize, and you need someone to actively watch the run cross entropy loss for this.

The cost for this is severalfold. First of all, a loss spike requires the training team to roll back to an earlier checkpoint, meaning all iterations burned post-spike have now gone to waste. If not watched for carefully, detection time can spiral out of control.

Command Queue Errors (CQEs): ~15% of failures. Each GPU receives commands from the GPU driver running on the host CPU (the analogue of NVIDIA’s CUDA driver), and those commands are then executed on the GPU itself. Sometimes, dust in the datacenter’s NIC → Command Queue path (especially if you don’t have fiber optic interconnects!) can prevent the signal from coming up, leading to random failures. The cost and resolution are similar to those of comms failures. In general, the comms failure time is reflective of the broader “binary search” needed to detect any single-node / point anomaly.
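The “binary search” behind that log2(N) localization time can be sketched as follows. This is a minimal illustration, not how Aegis does it: it assumes exactly one faulty node, and `group_is_healthy` is a hypothetical callback standing in for whatever collective or health test you would run on a subset of nodes. The 128-node count is also an assumption (1024 GPUs at 8 per node).

```python
import math
from typing import Callable, List, Sequence, Tuple


def localize_faulty_node(
    nodes: Sequence[str],
    group_is_healthy: Callable[[List[str]], bool],
) -> Tuple[str, int]:
    """Bisect the node list; returns (suspect node, number of checks run).

    Assumes exactly one faulty node is present in `nodes`.
    """
    if not nodes:
        raise ValueError("need at least one node")
    candidates = list(nodes)
    checks = 0
    while len(candidates) > 1:
        mid = len(candidates) // 2
        left, right = candidates[:mid], candidates[mid:]
        checks += 1
        # If the left half passes, the fault must be in the right half.
        candidates = right if group_is_healthy(left) else left
    return candidates[0], checks


# Simulated cluster: 128 nodes with one bad node (hypothetical names).
nodes = [f"node-{i:03d}" for i in range(128)]
bad_node = "node-077"


def fake_health_check(group: List[str]) -> bool:
    return bad_node not in group


found, checks = localize_faulty_node(nodes, fake_health_check)
print(found, checks, math.ceil(math.log2(len(nodes))))
```

Each check here is one full test run on half of the remaining nodes, so the wall-clock localization time is the number of checks times however long a single test takes on your cluster.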

Failovers

Now, a critical thing to zoom into is the actual job restart / failover time. In a naive distributed parallel case, which is common for models leveraging data parallelism and expert parallelism, failover time is primarily bound by checkpoint loads. Let’s examine some basic fault tolerance theory to think about how often we should even checkpoint our pretraining state (model weights, optimizer states, and potentially grads). In the literature, this is known as the Young/Daly formula for fault tolerance.

Assume we allow for Tcompute time of training step execution before our checkpointing, which itself takes Tsave time to fully finish. The total time we waste is proportional to Tsave / Tcompute in the long run.

Now, define the mean time between failures (MTBF), Tfailure, as the average runtime between consecutive failures of the training run. I.e., we can approximate this as MTBF = (total training time) / (number of failures).

Now, our recomputation cost per failure is Tcompute / 2 if we assume that failures are uniformly distributed. Why? We make the assumption that a failure can happen with equal likelihood at any point within that Tcompute window of work, so we can model the failure time as Uniform(0, Tcompute). The expected amount of work to redo is then (Tcompute - 0) / 2 = Tcompute / 2.

The failure rate itself is 1 / Tfailure = (number of failures) / (total training time). So, the recomputation cost per unit of training time is (1 / Tfailure) * (Tcompute / 2) = Tcompute / (2 * Tfailure).

To find the optimal Tcompute value, we can set up an objective of L = Tsave / Tcompute + Tcompute / (2 * Tfailure). Setting the first derivative with respect to Tcompute to zero gives -Tsave / Tcompute^2 + 1 / (2 * Tfailure) = 0, and solving for Tcompute gives us Tcompute = sqrt(2 x Tfailure x Tsave).

While this is all classical fault-tolerance theory, what matters for us is that Tcompute = Titer x niter, which essentially says that our compute time is our iteration time per step times the number of iterations.

So our effective niter value — the number of iterations we should do before a save — is

niter = sqrt(2 x Tfailure x Tsave) / Titer
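As a quick napkin calculation, the formula above can be evaluated directly. The numbers below are hypothetical placeholders (a 24-hour MTBF and a 10-minute save), not measurements from our cluster; only the 13-second iter time comes from the earlier example.

```python
import math


def young_daly_interval_s(mtbf_s: float, save_s: float) -> float:
    """Optimal compute time between checkpoints: sqrt(2 * Tfailure * Tsave)."""
    return math.sqrt(2.0 * mtbf_s * save_s)


def iters_between_checkpoints(mtbf_s: float, save_s: float, iter_time_s: float) -> int:
    """niter = sqrt(2 * Tfailure * Tsave) / Titer, rounded to a whole step."""
    return max(1, round(young_daly_interval_s(mtbf_s, save_s) / iter_time_s))


# Hypothetical inputs: MTBF of 24 hours, checkpoint save of 10 minutes.
MTBF_S = 24 * 3600
SAVE_S = 10 * 60
ITER_TIME_S = 13.0  # from the earlier cost example

interval = young_daly_interval_s(MTBF_S, SAVE_S)
n_iter = iters_between_checkpoints(MTBF_S, SAVE_S, ITER_TIME_S)
print(f"checkpoint every {interval / 3600:.2f} hours, i.e. every {n_iter} iterations")
```

Because the interval grows with the square root of Tsave, cutting the save time by 4x only halves the optimal interval, but it also shrinks the overhead term Tsave / Tcompute.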

In practice, Tsave is on the order of at least 1 hour for 1024 GPUs, and you can assume that most AI labs follow some similar level of napkin-calculations to set their checkpoint frequency. We can optimize this further by storing the checkpoints in RAM, which brings this down to 5-20 mins. This is a common optimization: in ByteRobust, they checkpoint across multiple nodes’ CPU RAM to do this. Note that you need your nodes to have copious amounts (>500 GB) of RAM to do this!!

The case for resiliency

Hopefully, the last couple of sections on the daunting costs to pretrain, which worsen broadly with low efficiency, convince you that cluster resiliency has significant cost-saving potential. Specifically, cluster resiliency automates the localization portion of the failure process, making failover the bottleneck rather than human negligence (the alternative is to just have someone babysit the run).

Where the ROI is in cluster resiliency that I can share

From the time spent observing Aegis in production, I’d say that ROI comes from a minimal subset of features. Those mainly address the following problems, which can be expressed as code / features within cluster resiliency software:

Node Swaps: Once a node has been identified as faulty, can we swap it out with a cache of nodes we know to work?

Run Restarts: On a run failure such as a hang, which generally requires no additional changes, can we automatically restart the run?

Checkpoint Rollbacks: In the event of a loss spike, can we (1) identify the checkpoint the run is currently on, and (2) appropriately roll the pretraining run’s state back to a specific earlier checkpoint? This is highly critical for SDCs.
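To make the rollback feature a bit more concrete, here is a minimal sketch of the decision logic: flag the first loss value that jumps well above a rolling median, then pick the latest checkpoint saved before it. The window size, threshold, and function names are all assumptions for illustration, and a real SDC may have corrupted state before the spike becomes visible, so you may want to roll back further than this picks.

```python
from statistics import median
from typing import Optional, Sequence


def first_spike_step(
    losses: Sequence[float], window: int = 20, threshold: float = 1.5
) -> Optional[int]:
    """Index of the first loss above threshold x median of the prior window."""
    for step in range(window, len(losses)):
        baseline = median(losses[step - window:step])
        if losses[step] > threshold * baseline:
            return step
    return None


def rollback_checkpoint(
    checkpoint_steps: Sequence[int], spike_step: int
) -> Optional[int]:
    """Latest checkpoint step saved strictly before the spike, if any."""
    earlier = [s for s in checkpoint_steps if s < spike_step]
    return max(earlier) if earlier else None


# Synthetic loss curve: flat at 2.0, then a jump to 6.0 at step 50.
losses = [2.0] * 50 + [6.0] * 5
spike = first_spike_step(losses)
target = rollback_checkpoint([0, 20, 40, 60], spike) if spike is not None else None
print(f"spike at step {spike}, roll back to checkpoint at step {target}")
```

In practice the loss values would come from your experiment tracker, and the checkpoint list from wherever the run writes its saves.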

One final nugget of information: Unit tests probably don’t help as much. Simulate directly and aggressively through a git fork/branch in the training code; it may seem clunky but it’s better to directly inject error traces in the long run than to have isolated CI tests that may not be representative.

Conclusion / Next Steps

Congratulations! You’ve understood the core failure-related challenges a model training team faces, and the economics behind mitigating this high-risk, high-reward situation. To my knowledge, this serves as a broad introduction. Unfortunately, systems papers on pretraining and fault tolerance are scarce or opaque in details. I struggled to find directly analogous research after weeks of diving through arXiv, Googling, and asking AI. What you will find are papers describing schemes for subcomponents, mostly related to mitigating failover time from checkpointing.

In hopes of providing a constructive nudge, I’ll suggest how I would start designing the system if I had to do it all over again: I’d make a very dumb cron job that simply reads logs and reports stack traces. That’ll give you quick signal. From there, scale out to tackle the three features mentioned above.
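A very dumb version of that log reader could look like the sketch below. It assumes the training code is Python and that tracebacks appear unprefixed in the log file; if your launcher prepends rank or timestamp prefixes to every line, the pattern would need adjusting. The function name is hypothetical.

```python
import re
import sys
from pathlib import Path
from typing import List

# Assumes unprefixed Python tracebacks in the log.
TRACE_START = re.compile(r"^Traceback \(most recent call last\):")


def extract_tracebacks(log_text: str) -> List[str]:
    """Collect each Python traceback found in the log as one string."""
    traces: List[str] = []
    current = None
    for line in log_text.splitlines():
        if TRACE_START.match(line):
            if current:
                traces.append("\n".join(current))
            current = [line]
        elif current is not None:
            current.append(line)
            # Stack frames are indented; the first unindented line is the
            # exception message, which ends the traceback.
            if not line.startswith(" "):
                traces.append("\n".join(current))
                current = None
    if current:
        traces.append("\n".join(current))
    return traces


if __name__ == "__main__":
    # Usage: python report_traces.py <log file> [<log file> ...]
    for path in sys.argv[1:]:
        text = Path(path).read_text(errors="replace")
        for trace in extract_tracebacks(text):
            print(f"--- {path} ---\n{trace}\n")
```

Scheduling this every few minutes with cron and piping the output to wherever your team already looks (chat, email) is enough to start collecting failure signal.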

Good luck on your pretraining journey! Feel free to reach out if you have questions on any of the content here. :D

Resources

https://arxiv.org/html/2511.17127v2

https://arxiv.org/abs/2509.16293

Addendums

PS: The same justification approach can be used to argue for the need for a performance engineer. If we compute the cost as a function of the iteration time, we’ll see that it drops significantly for even 0.1 seconds of optimization.
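As a rough sketch of that argument, here is the cost as a function of iter time using the example numbers from the Ideal Costs section (13 s baseline, GBS 2048, sequence length 65,536, 10T tokens, 1024 GPUs at $1.99). The function name is hypothetical and efficiency is left at 1.0 by default.

```python
def train_cost(iter_time_s, num_iters, cluster_rate_per_hour, efficiency=1.0):
    """Total cost in dollars for a run with the given per-step time."""
    hours = iter_time_s * num_iters / 3600.0 / efficiency
    return hours * cluster_rate_per_hour


NUM_ITERS = 10 * 10**12 / (2048 * 65_536)  # total tokens / tokens per step
RATE = 1024 * 1.99                         # dollars per cluster-hour

baseline = train_cost(13.0, NUM_ITERS, RATE)
faster = train_cost(12.9, NUM_ITERS, RATE)
savings = baseline - faster
print(f"saving 0.1 s per step is worth ${savings:,.0f} on this run")
```

At ideal efficiency this comes out to roughly $4,200 per 0.1 seconds shaved off each step, and the figure grows as 1 / e once you account for a realistic efficiency coefficient.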